Cerebras is now a public company

Thank you to the customers, partners, and team who made this possible. The world runs faster on Cerebras.

Just the facts

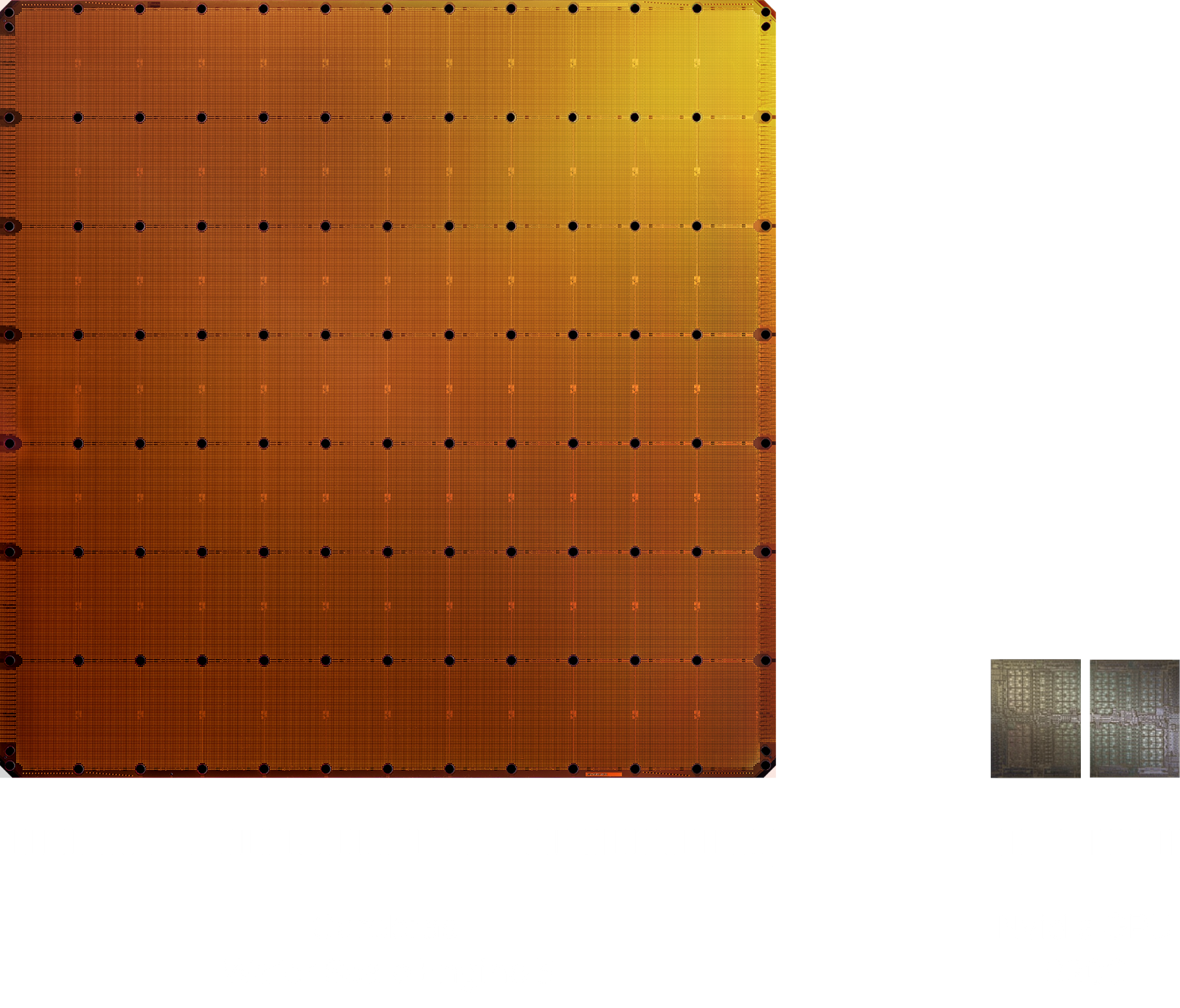

Cerebras builds the world's fastest AI inference platform, powered by the wafer-scale engine — the largest chip ever made — delivering up to 15x faster inference than GPUs.

Stock Exchange: NASDAQ

Ticker Symbol: CBRS

Initial Public Offering: May 14, 2026

Andrew Feldman

Co-founder & CEO

Cerebras Systems

Sam Altman

CEO

OpenAI

Jack Dorsey

CEO

Block

Customer Stories

We believe that Cerebras is the best high-speed inference offering in the world right now, and it's hard to overstate how important high-speed inference is. Fast inference has emerged as this extremely important category, and we're thrilled to get to offer this. The feedback from customers has been incredible.

By partnering with Cerebras, we are integrating cutting-edge AI infrastructure […] that allows us to deliver the unprecedented speed, most accurate and relevant insights available – helping our customers make smarter decisions with confidence.

With Cerebras’ inference speed, GSK is developing innovative AI applications, such as intelligent research agents, that will fundamentally improve the productivity of our researchers and drug discovery process.